Technical Architecture

Built on a production-grade RAG pipeline featuring hybrid retrieval, cross-encoder reranking, and cloud-native deployment on AWS.

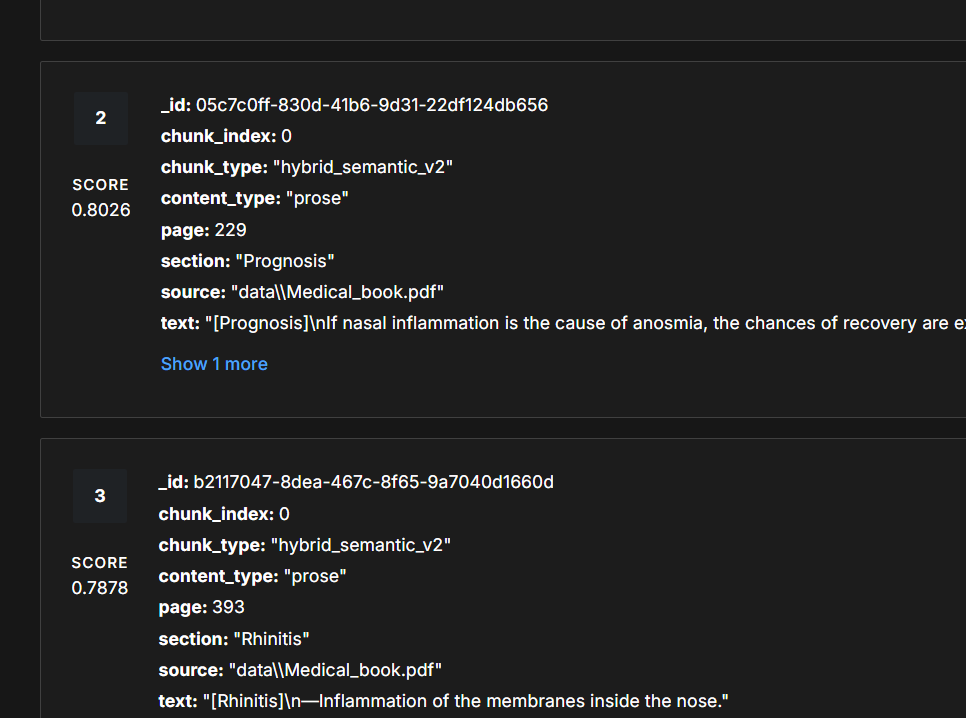

RAG-Powered Accuracy

92% answer relevancy with source-grounded medical responses.

Hybrid Retrieval Pipeline

MMR + BM25 ensemble with cross-encoder reranking for precision.

AWS Cloud Deployment

Docker containers on EC2 with GitHub Actions CI/CD pipeline.

Vector Search with Pinecone

384-dim embeddings for semantic medical document retrieval.

Llama 3.3 70B Generation

Context-only LLM responses with 88.7% faithfulness score.

Comprehensive Testing Suite

153 tests covering unit, integration, security, and performance.

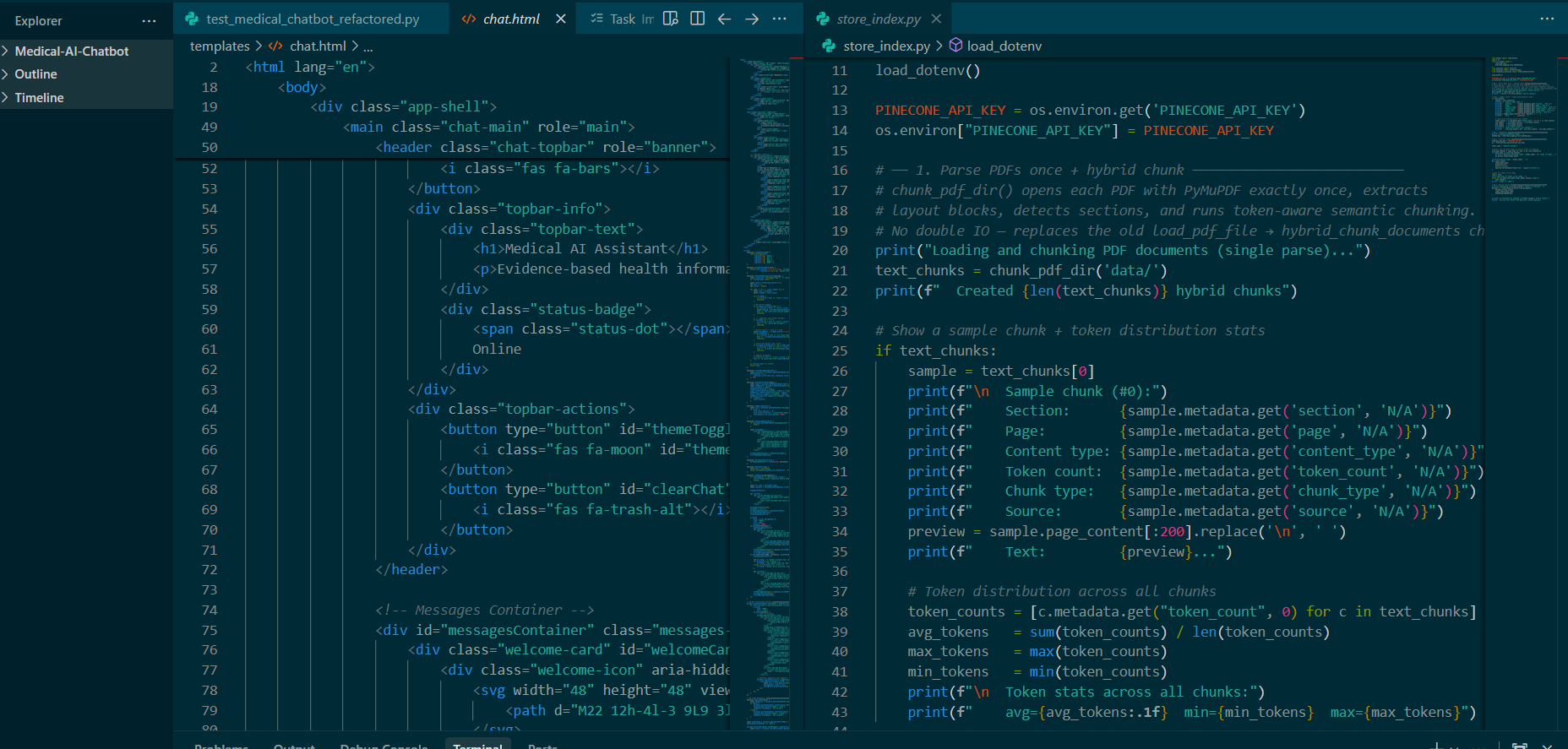

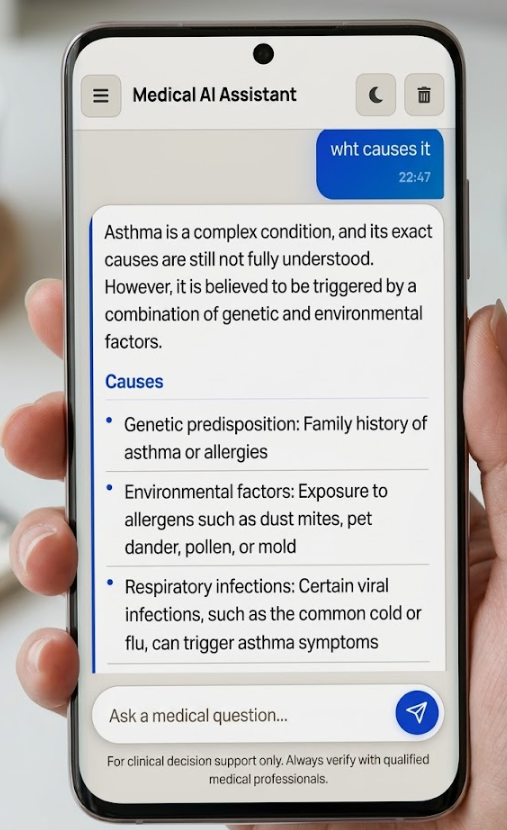

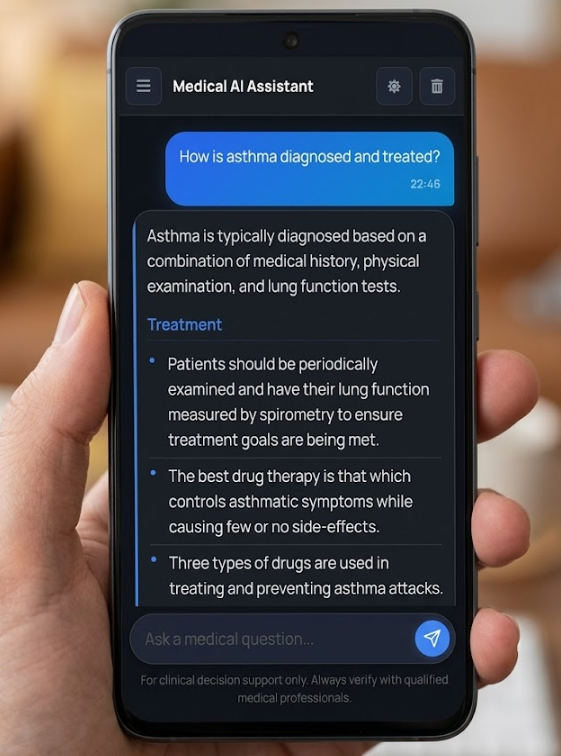

Intelligent Medical Assistant

Beautiful, responsive interface with light and dark modes. Get evidence-based medical information with source citations.

Hybrid Retrieval Pipeline

Production-grade RAG architecture combining multiple retrieval strategies for maximum accuracy and relevance.

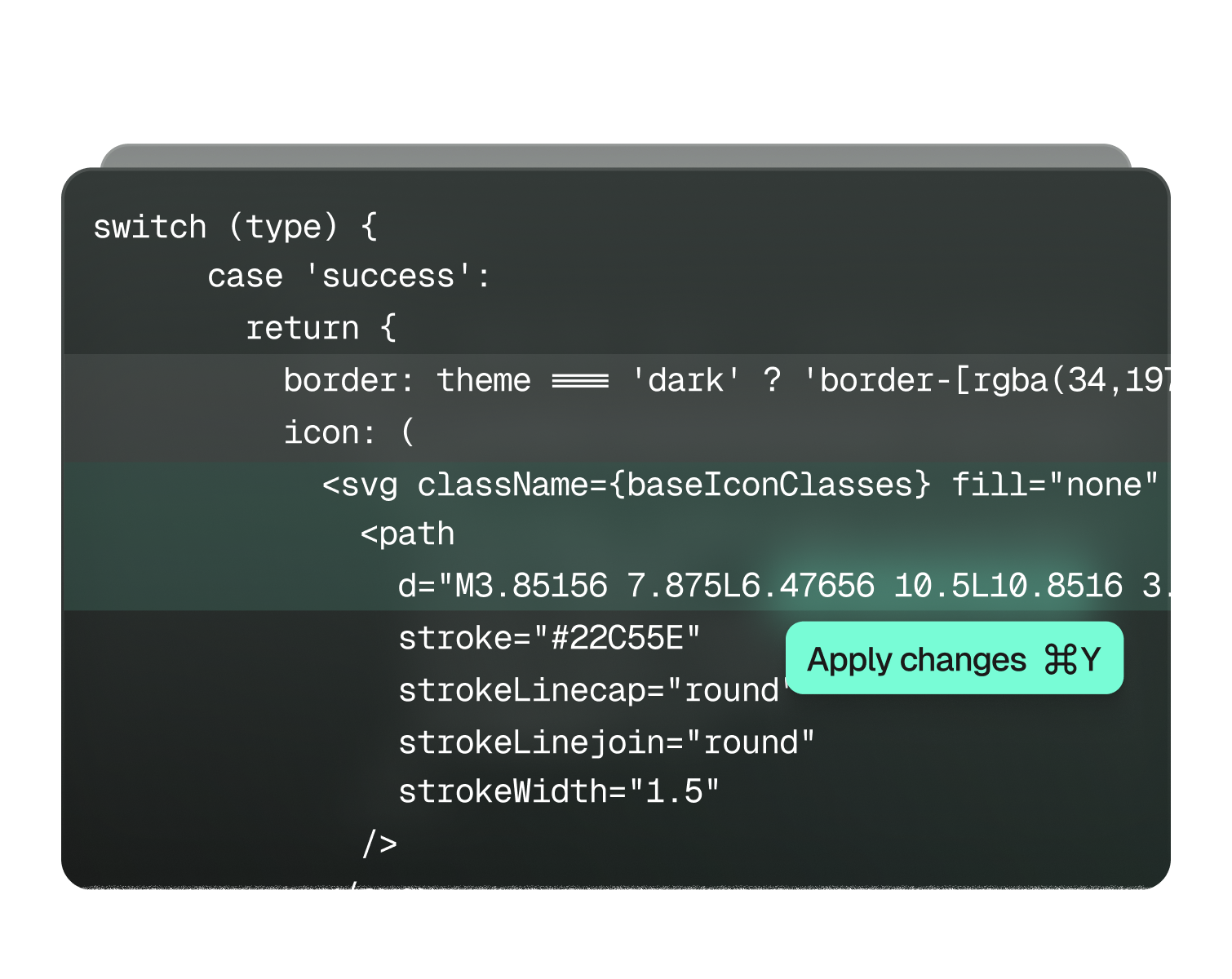

Query Rewriting

Llama 3.1 8B resolves pronouns and expands medical terminology for precise retrieval.

    # Pronoun resolution
    # "What are its side effects?" → "What are the side effects of metformin?"
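As a rough sketch of how the rewriting step might be wired up, the helper below builds the prompt that would be sent to the rewriting model. The function name `build_rewrite_prompt`, the prompt wording, and the message format are assumptions for illustration, not Medi-Query's actual code; the model call itself is left out.

```python
from typing import Dict, List


def build_rewrite_prompt(history: List[Dict[str, str]], question: str) -> str:
    """Build a prompt asking a small LLM to make `question` self-contained.

    Hypothetical helper: the prompt wording is illustrative only.
    """
    # Render earlier turns as "role: content" lines so the model can see
    # which entity a pronoun such as "its" refers to.
    transcript = "\n".join(f"{m['role']}: {m['content']}" for m in history)
    return (
        "Rewrite the final question so it can be understood without the "
        "conversation. Replace pronouns with the entities they refer to and "
        "keep medical terms precise. Return only the rewritten question.\n\n"
        f"Conversation:\n{transcript}\n\n"
        f"Final question: {question}"
    )


history = [
    {"role": "user", "content": "What is metformin used for?"},
    {"role": "assistant", "content": "Metformin is used to treat type 2 diabetes."},
]
prompt = build_rewrite_prompt(history, "What are its side effects?")
```

The rewritten question returned by the model, rather than the raw user input, would then be passed to retrieval.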

Hybrid Retrieval

MMR (60%) + BM25 (40%) ensemble combines semantic understanding with keyword matching.

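    # Import path may differ between LangChain versions
    from langchain.retrievers import EnsembleRetriever

    # Weighted ensemble: 60% MMR (semantic) + 40% BM25 (keyword)
    ensemble_retriever = EnsembleRetriever(
        retrievers=[mmr_retriever, bm25_retriever],
        weights=[0.6, 0.4],
    )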
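An ensemble like this typically merges results by rank rather than by raw score, using a weighted form of reciprocal rank fusion. The following is a minimal sketch of that idea in plain Python, not the library's implementation; the function name `weighted_rrf`, the constant `c = 60`, and the document IDs are assumptions for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def weighted_rrf(
    ranked_lists: Sequence[List[str]],
    weights: Sequence[float],
    c: int = 60,
) -> List[str]:
    """Fuse ranked lists of document IDs with weighted reciprocal rank fusion."""
    if len(ranked_lists) != len(weights):
        raise ValueError("need exactly one weight per ranked list")
    scores: Dict[str, float] = defaultdict(float)
    for docs, weight in zip(ranked_lists, weights):
        for rank, doc_id in enumerate(docs, start=1):
            # A document earns more credit the higher it ranks in each list.
            scores[doc_id] += weight / (rank + c)
    # Highest fused score first; ties fall back to ID order for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


mmr_hits = ["doc_a", "doc_b", "doc_c"]   # hypothetical semantic (MMR) results
bm25_hits = ["doc_b", "doc_d", "doc_a"]  # hypothetical keyword (BM25) results
fused = weighted_rrf([mmr_hits, bm25_hits], weights=[0.6, 0.4])
```

In this toy input, `doc_b` ends up first because it ranks highly in both lists, even though the semantic retriever alone preferred `doc_a`.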

Cross-Encoder Reranking

ms-marco-MiniLM-L-6-v2 scores query-document pairs for final relevance ranking.

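    from sentence_transformers import CrossEncoder

    reranker = CrossEncoder("cross-encoder/ms-marco-MiniLM-L-6-v2")
    # rank() scores each (query, passage) pair; docs is assumed to be a list of strings
    hits = reranker.rank(query, docs, top_k=8)
    top_docs = [docs[hit["corpus_id"]] for hit in hits]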
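The selection logic itself is independent of the model. The sketch below shows it with a pluggable scoring function; `rerank`, `score_fn`, and the toy `overlap_score` are hypothetical names, and the word-overlap scorer is only a stand-in for a real cross-encoder so the example runs without downloading a model.

```python
from typing import Callable, List


def rerank(
    query: str,
    docs: List[str],
    score_fn: Callable[[str, str], float],
    top_n: int = 8,
) -> List[str]:
    """Score every (query, document) pair and keep the `top_n` best documents."""
    scored = [(score_fn(query, doc), doc) for doc in docs]
    # list.sort() is stable, so equally scored documents keep retrieval order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_n]]


def overlap_score(query: str, doc: str) -> float:
    """Toy stand-in for a cross-encoder: count of shared lowercase words."""
    return float(len(set(query.lower().split()) & set(doc.lower().split())))


docs = [
    "Aspirin can irritate the stomach lining.",
    "Metformin side effects include nausea and diarrhea.",
    "Common side effects of metformin are mild.",
]
top = rerank("side effects of metformin", docs, overlap_score, top_n=2)
```

Unlike the retrievers, which score the query and documents separately, a cross-encoder reads each pair jointly, which is why it is applied only to the short candidate list.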

Context-Only Generation

Llama 3.3 70B generates responses strictly grounded in retrieved context.

    system_prompt = (
        "Answer ONLY from the provided context. "
        "If information is not in the context, say "
        "'I don't have enough information to answer that.'"
    )
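One way such a prompt could be combined with the reranked passages is sketched below. The function `build_messages`, the chunk dictionary keys (`source`, `text`), and the file name are assumptions; only the system prompt text comes from the page.

```python
from typing import Dict, List

SYSTEM_PROMPT = (
    "Answer ONLY from the provided context. If information is not in the "
    "context, say 'I don't have enough information to answer that.'"
)


def build_messages(question: str, chunks: List[Dict[str, str]]) -> List[Dict[str, str]]:
    """Assemble chat messages whose only knowledge source is `chunks`."""
    # Number each chunk and attach its source so the answer can cite it.
    context = "\n\n".join(
        f"[{i}] (source: {chunk['source']})\n{chunk['text']}"
        for i, chunk in enumerate(chunks, start=1)
    )
    user_content = f"Context:\n{context}\n\nQuestion: {question}"
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_content},
    ]


# Hypothetical retrieved chunk
chunks = [{"source": "metformin_leaflet.pdf", "text": "Common side effects include nausea."}]
messages = build_messages("What are the side effects of metformin?", chunks)
```

Keeping the source label next to each passage is what makes citation-backed answers possible downstream.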

Context-Aware Chunking

Optimized document segmentation preserves semantic coherence for medical content.

A 100-token overlap between adjacent chunks ensures context continuity.
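A sliding window is one simple way to get this kind of overlap. In the sketch below, only the 100-token overlap comes from the page; the 500-token chunk size, the function name `chunk_tokens`, and the use of placeholder tokens instead of a real tokenizer are assumptions.

```python
from typing import List


def chunk_tokens(tokens: List[str], chunk_size: int = 500, overlap: int = 100) -> List[List[str]]:
    """Split `tokens` into windows that share `overlap` tokens with their neighbor."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reaches the end of the document
    return chunks


tokens = [f"t{i}" for i in range(1200)]  # placeholder tokens stand in for a real tokenizer
chunks = chunk_tokens(tokens, chunk_size=500, overlap=100)
```

Because each window repeats the tail of the previous one, a sentence that straddles a boundary still appears whole in at least one chunk as long as it is shorter than the overlap.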

Session Memory

Maintains last 10 exchanges (20 messages) for pronoun resolution only.
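A bounded buffer is enough to express this policy. The class below is a sketch under assumed names (`SessionMemory`, `add_exchange`, `history`); it relies on `collections.deque` dropping the oldest entries once the 20-message limit is reached.

```python
from collections import deque
from typing import Deque, Dict, List


class SessionMemory:
    """Keep only the most recent exchanges of one chat session."""

    def __init__(self, max_exchanges: int = 10) -> None:
        # One exchange is a user message plus an assistant message.
        self._messages: Deque[Dict[str, str]] = deque(maxlen=2 * max_exchanges)

    def add_exchange(self, user_text: str, assistant_text: str) -> None:
        self._messages.append({"role": "user", "content": user_text})
        self._messages.append({"role": "assistant", "content": assistant_text})

    def history(self) -> List[Dict[str, str]]:
        return list(self._messages)


memory = SessionMemory()
for i in range(12):
    memory.add_exchange(f"question {i}", f"answer {i}")
```

After twelve exchanges, the two oldest have been discarded and the history starts at `question 2`.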

Temperature Control

Set to 0 for deterministic, factual medical responses without creativity.
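To illustrate why a temperature of 0 removes randomness, the toy sampler below treats it as greedy decoding and falls back to temperature-scaled softmax sampling otherwise. The function `sample_token` and the logit values are made up for the example and do not reflect how any particular inference API is implemented.

```python
import math
import random
from typing import Dict, Optional


def sample_token(
    logits: Dict[str, float],
    temperature: float,
    rng: Optional[random.Random] = None,
) -> str:
    """Pick the next token from `logits` at the given sampling temperature."""
    if temperature == 0:
        # Greedy decoding: always the highest-scoring token, no randomness.
        return max(logits, key=logits.get)
    rng = rng or random.Random()
    peak = max(logits.values())
    # Softmax with temperature; subtracting the peak keeps exp() stable.
    weights = [math.exp((value - peak) / temperature) for value in logits.values()]
    return rng.choices(list(logits), weights=weights, k=1)[0]


logits = {"nausea": 2.1, "headache": 1.9, "euphoria": 0.3}  # made-up scores
greedy = [sample_token(logits, temperature=0) for _ in range(5)]
```

At temperature 0 every call returns the same token, whereas higher temperatures give lower-scoring tokens a growing chance of being chosen.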

Hallucination Prevention

Strict context-only mode reduces the hallucination rate from 35% to 5%.

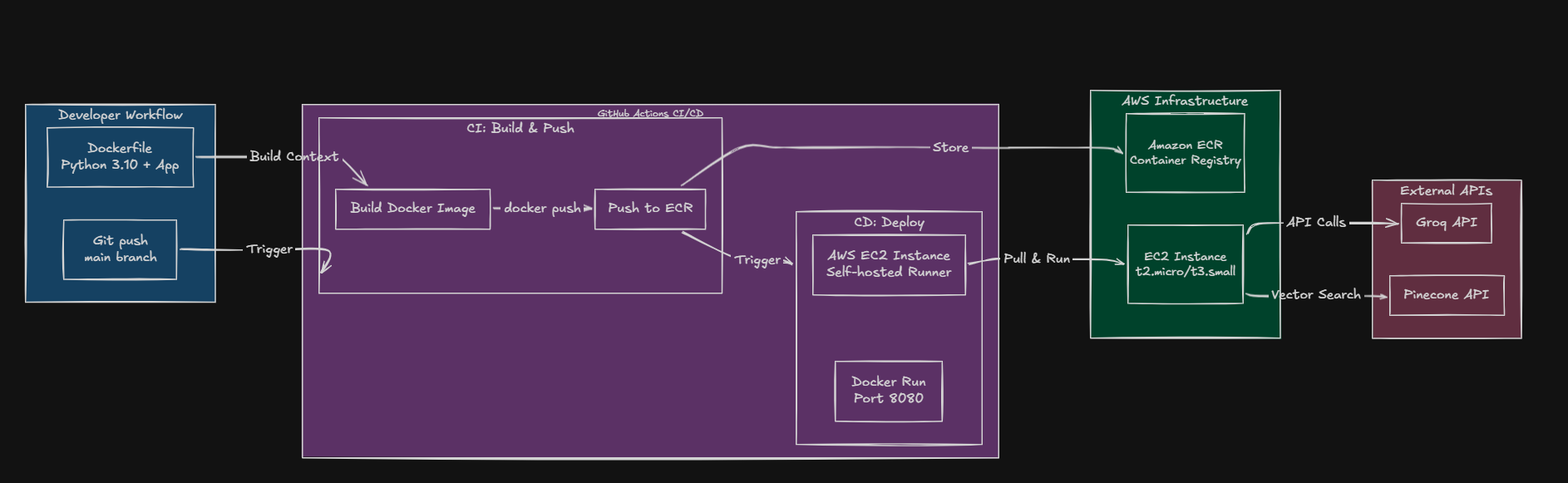

System Architecture

Production-grade RAG pipeline with hybrid retrieval, cross-encoder reranking, and cloud-native deployment

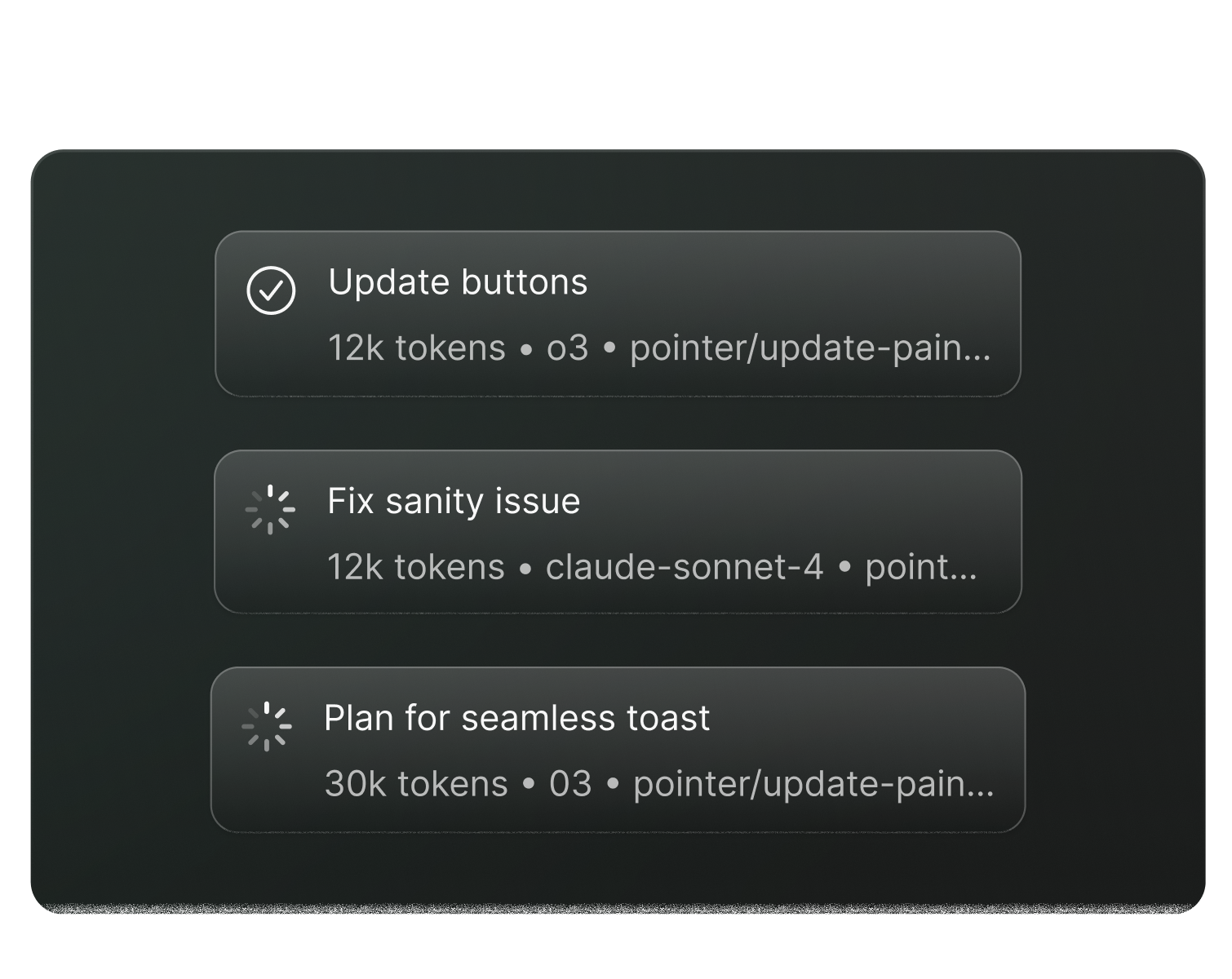

CI/CD Pipeline Architecture

GitHub Actions with Docker, ECR, and EC2 deployment

RAGAS Evaluation Metrics

RAG Pipeline Flow

Query Rewrite

Llama 3.1 8B resolves pronouns and expands queries

Hybrid Retrieval

MMR + BM25 ensemble (0.6/0.4 weights)

Cross-Encoder Rerank

ms-marco-MiniLM-L-6-v2 selects top-8 docs

Response Generation

Llama 3.3 70B with context-only mode

Technology Stack

Key Configuration Parameters

Technical Documentation

Comprehensive documentation covering architecture, testing, deployment, and evaluation metrics

Software Requirements Specification

IEEE 830-1998 compliant SRS document covering functional and non-functional requirements, system architecture, and use cases.

RAG Architecture

Complete documentation of the Retrieval-Augmented Generation pipeline including document indexing, query processing, and response generation.

CI/CD Pipeline

GitHub Actions workflow with Docker containerization, Amazon ECR registry, and automated EC2 deployment.

RAGAS Evaluation Report

Comprehensive evaluation using the RAGAS framework measuring faithfulness, relevancy, precision, and recall metrics.
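For intuition about one of these metrics: RAGAS defines faithfulness as the share of claims in an answer that can be inferred from the retrieved context. The sketch below computes only that final ratio; in the real framework an LLM judge extracts the claims and produces the verdicts, so the function name and the hard-coded verdict list here are assumptions.

```python
from typing import List


def faithfulness(claim_supported: List[bool]) -> float:
    """Fraction of answer claims that the retrieved context supports."""
    if not claim_supported:
        raise ValueError("at least one claim is required")
    return sum(claim_supported) / len(claim_supported)


# Hypothetical verdicts for one answer split into four claims.
verdicts = [True, True, True, False]
score = faithfulness(verdicts)
```

A dataset-level score is then typically the average of these per-answer ratios.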

Testing Documentation

Complete test suite documentation covering unit, integration, security, performance, and system workflow tests.

Security Testing

Security vulnerability testing including XSS prevention, SQL injection protection, and API key security validation.
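As an illustration of what an XSS-prevention check can look like, the sketch below escapes user-supplied text with the standard library before it would be rendered. The helper name `render_user_text` and the payload are hypothetical and are not taken from the project's test suite.

```python
import html


def render_user_text(text: str) -> str:
    """Escape user-supplied text before it is placed into an HTML page."""
    return html.escape(text, quote=True)


payload = '<script>alert("xss")</script>'
rendered = render_user_text(payload)
```

A test for this behavior would assert that no raw `<script>` tag survives in the rendered output.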

Project Highlights

Problem Solved

- Information overload in medical queries reduced through curated retrieval

- Hallucination rate reduced from 35% to 5% using RAG architecture

- Context loss in conversations handled via pronoun resolution

- Source attribution enables response verification

Key Achievements

- 92% accuracy vs 65% for non-RAG systems (+27 percentage points)

- 100% SLA compliance with all queries under 5 seconds

- Production-ready with Gunicorn, health endpoint, and Docker

- Automated CI/CD with GitHub Actions and AWS deployment
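The health endpoint mentioned above can be very small. The following is a framework-free sketch of a WSGI application that a server such as Gunicorn could run; the `/health` path, the JSON payload, and the callable name `app` are assumptions, since the page does not show the actual route or web framework.

```python
import json
from typing import Callable, Iterable, List, Tuple

StartResponse = Callable[[str, List[Tuple[str, str]]], object]


def app(environ: dict, start_response: StartResponse) -> Iterable[bytes]:
    """Minimal WSGI app exposing a health check at the hypothetical path /health."""
    if environ.get("PATH_INFO") == "/health":
        status, payload = "200 OK", {"status": "healthy"}
    else:
        status, payload = "404 Not Found", {"error": "not found"}
    body = json.dumps(payload).encode("utf-8")
    start_response(status, [
        ("Content-Type", "application/json"),
        ("Content-Length", str(len(body))),
    ])
    return [body]
```

Saved as `wsgi.py`, a module like this could be served with `gunicorn wsgi:app`, and a container health check or load balancer could poll the endpoint.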

Frequently Asked Questions

Everything you need to know about Medi-Query's RAG architecture and technical implementation